If you're wondering why trans people have no use for the Just Asking Questions approach toward their medical care taken by so many online contrarians, well, here you go: Amid.

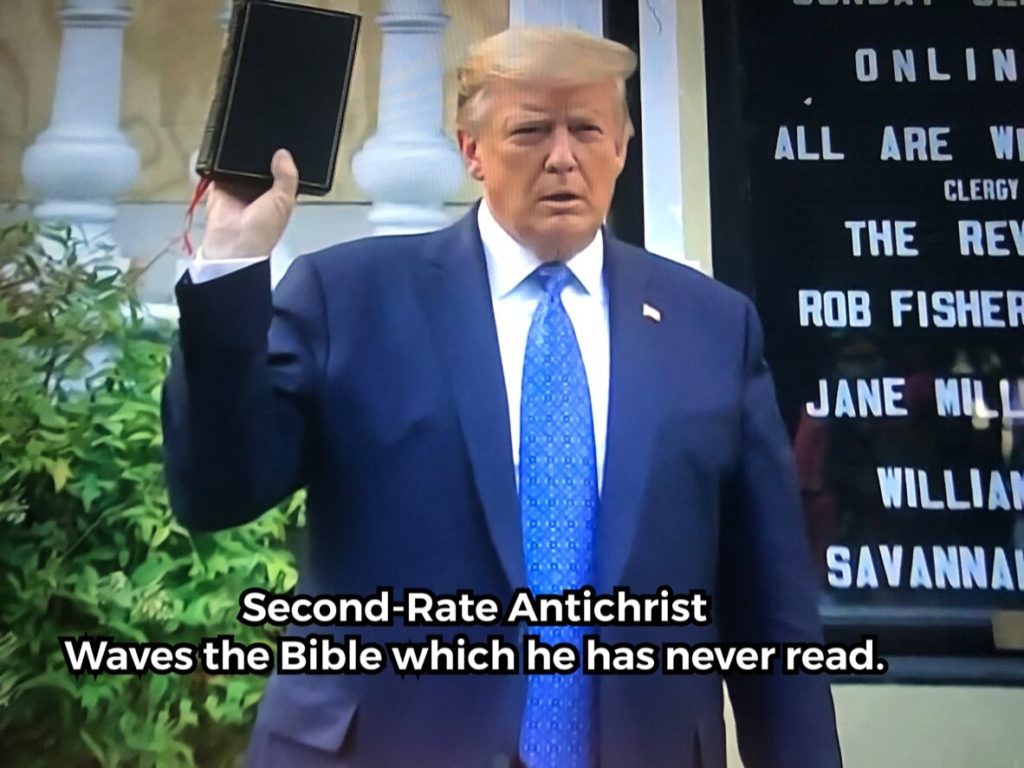

The medieval historian Jay Rubenstein asks the question! The Antichrist associated with this new, literal Babylon was expected to be Jewish. There’s nearly always an anti-Judaic element in Christian apocalyptic.

So Matt Gaetz decided to take his case to the public tonight and, um, yeah: Full video thread of Matt Gaetz's bonkers appearance on Tucker Carlson Tonight -- one Carlson.

For tonight's film, let's watch Martin Luther King's 1967 speech at Stanford. Titled "The Other America," here he laid out his late-life quest to fight poverty in a cross-racial campaign.

Sometimes people are as creepy as they look, apparently: Representative Matt Gaetz, Republican of Florida and a close ally of former President Donald J. Trump, is being investigated by the.

When nihilists like Greg Abbott prematurely lift and preempt mask mandates, they put ordinary minimum wage workers who don't want to be exposed to a deadly virus in an incredibly.

This seems like a big deal. https://twitter.com/Travis_Waldron/status/1376927998520221698 The link is in Portuguese and Google Translate isn't as helpful as you'd like, but this seems quite major. Here's a WaPo piece.

The AFL-CIO's Solidarity Center has a report out about forced labor from Turkmenistan in the cotton supply chains. It's a pretty bloody awful story. Cotton bound for global markets from.

- We’lL be Greeted as lLiberators! Thank yo for your attention to this matter!!!

- I would like to give all glory to God for hitting this four-team teaser parlay

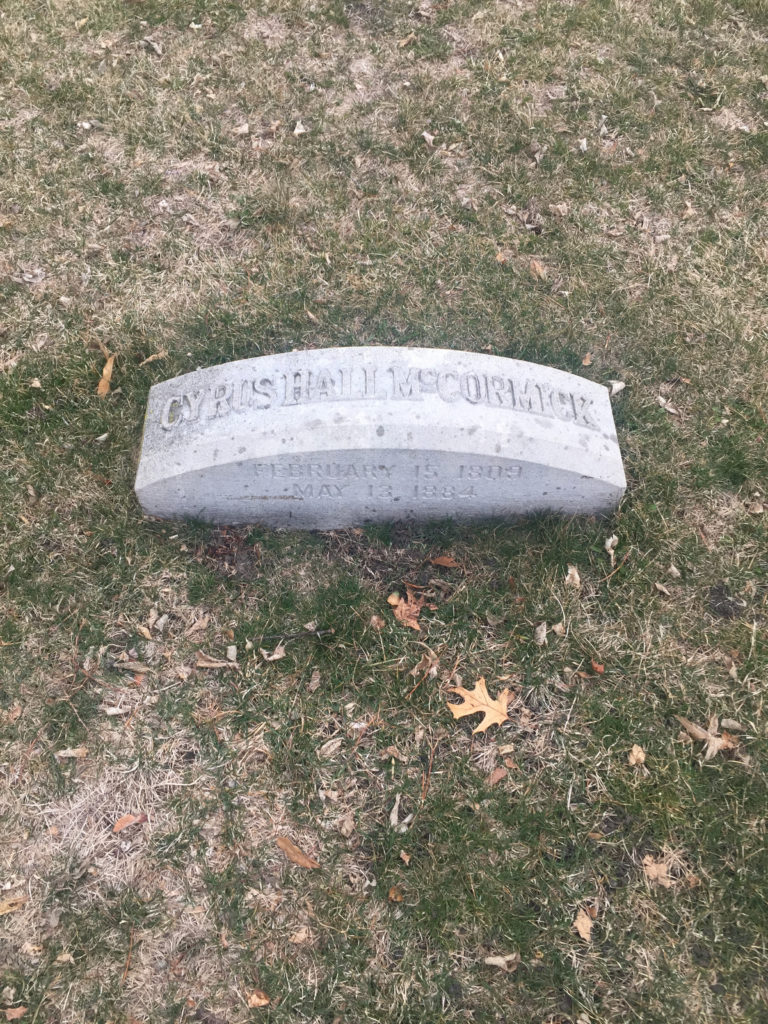

- Erik Visits an American Grave, Part 2,051

- Turning tail

- 2025 Year in Review

- Tired old man ignoring the advice of his doctors

- Did I make this one up?

- CFB Playoff Thread

- What would happen if you made a mobbed up grifting malignant narcissist president of the United States?

- December Reading List