Author: Paul Campos

Of the action: President Donald Trump's "Board of Peace" gathered in Washington on Thursday for its long-awaited first meeting, with the next stage of the fragile ceasefire in Gaza in focus. It was an event.

British police on Thursday arrested Andrew Mountbatten-Windsor, [the pedophile] formerly known as Prince Andrew, over suspicions of misconduct in public office after accusations that he shared confidential information with Jeffrey.

This (gift link; h/t commenter Shirley0401) looks like a really interesting oral history of the reaction of people in and around the Obama administration to Trump's win in 2016.

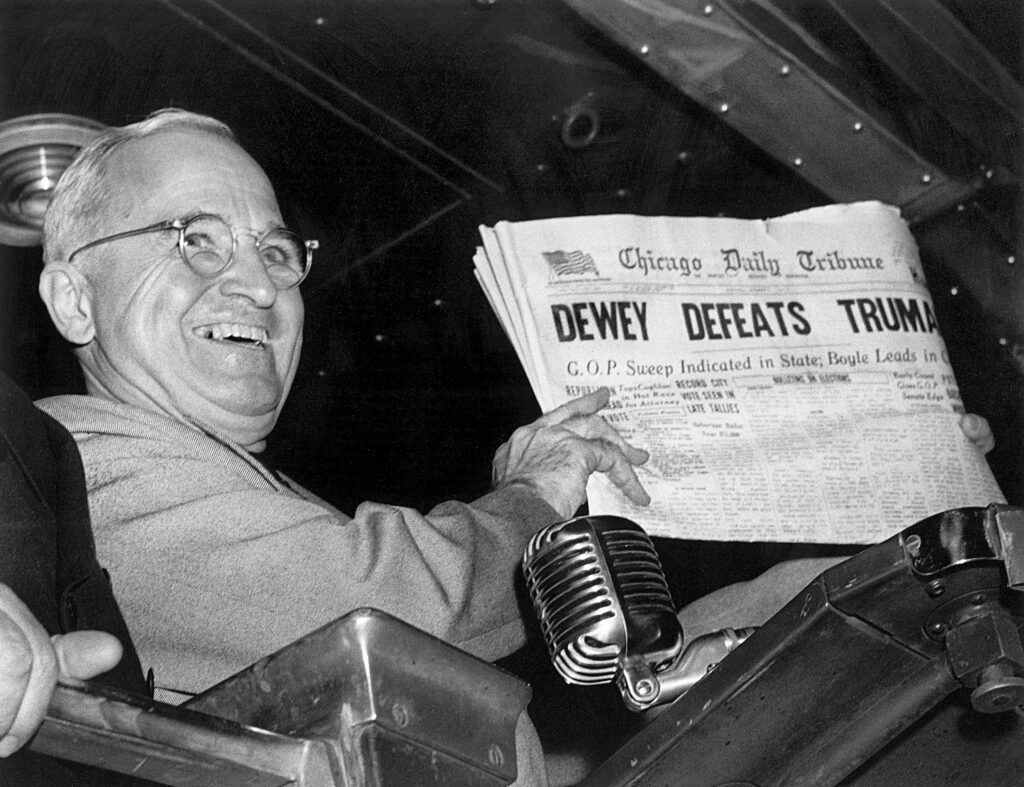

FILE - In this Nov. 4, 1948, file photo, President Harry S. Truman at St. Louis' Union Station holds up an election day edition of the Chicago Daily Tribune, which.

That this is now a successful career strategy tells you all you need to know about Donald Trump, MAGA, and the Republican party (three terms for exactly the same thing).

Except he can't, because the malignant narcissism leads to, among other things, skipping the whole consciousness of feeling oneself slipping stage: https://twitter.com/HoopsCrave/status/2023099693039812930 Now what could possibly have triggered something as.

While I've found various AI cheerleaders' propaganda about the capabilities/ontology of Large Language Models to be absurdly exaggerated, I'm a lot more worried about this kind of thing: Is seeing.

What's the hyperbole in this statement? https://bsky.app/profile/ianbassin.bsky.social/post/3metfz5qd6k2k There is none. The CIC of the armed forces telling members of the military who they have to vote for -- in ordinary.